In his December, 2007 Techlog column, PC World Editor in Chief Harry McCracken wrote,

I should add that this and next month’s newsletters are by no means a comprehensive overview of the possible future of the web. There are plenty of general themes and specific technologies that I won’t cover, and among those that I do cover, there will probably be some disagreement on the finer points. I welcome your feedback, suggestions, and corrections!

When social networks first began to pick up speed, I spent a decent amount of time wondering whether they would ultimately kill the website. At the time, it seemed possible. After all, if a business could have a functional web presence within a larger pool of interested people without having to worry about laborious design and development costs, that would be appealing, right? To a large extent, this is what happened for individuals: many people I know who once maintained a personal website now focus primarily on a social media platform instead. And for businesses, social networks do serve as wonderful outposts for receiving and qualifying users. Once they’ve done that, though, the user is looking for a level of informational sophistication and specificity that a social network can’t deliver as well as a dedicated custom website can. As a result, social media has had the opposite effect from what was predicted: the more popular social networks like Facebook, LinkedIn, Twitter, and MySpace have become, the more important it has been for businesses to have a stellar website to refer people to.

Websites are Still Command Central

While this idea of website centrality is quite obvious for business-to-consumer e-commerce companies (e.g., Amazon.com, Nike.com, or Apple.com), it’s not so obvious for business-to-business service-based companies. Companies in this space are primarily concerned with using the website as a marketing tool to generate and nurture leads, in addition to serving existing customers. This requires a sophisticated website that can process incoming user data as well as integrate with all kinds of external platforms, ranging from newsletter tools to CRMs to social media tools. So while the website may depend upon outposts to deliver people, it must then be capable of operating as a “central command,” synthesizing data from many sources and controlling the flow of information in and out on behalf of the company that maintains it.

What I’ve just described is the paradigm of contemporary web marketing. From your firm’s point of view, your website should sit at the center of the web, with concentric rings radiating out from it that represent the outgoing flow of the content you create and the incoming flow of user traffic and discussion. The trends that we’ll explore in this newsletter and its continuation next month, which range from the mainstreaming of social media to legal issues of privacy, all affirm the continuing centrality of your website.

With shockingly high adoption rates and history-altering uses by massive groups of people, it’s clear that the social media paradigm is quite mainstream. Indeed, we are in the midst of what will be looked back upon as a very tumultuous period of social and economic change during which our global society was radically shaped, in both conceptual and concrete ways, by communications technology. (The impact of social media on our society at large is not the focus of this newsletter, but if you’re interested in a more academic approach to that topic, I’d like to recommend author Clay Shirky’s recent TED talk, How Social Media Can Make History.) As such, it’s no surprise that social media has revolutionized businesses in almost every industry, especially ours.

Social Media and Business

Increased online social activity has presented an obvious opportunity for marketing and advertising that has yet to be fully mastered. But for businesses, establishing simple and effective social media outposts can quickly bring local advantages to global operations. What I mean by this is that actual personal relationships are just as valuable to a firm serving clients all over the planet as they are to small, local businesses. Social networks can foster the kind of real relationships that enterprises used to assume only mattered to customers who walked into the local hardware store. But I’m not sure that was ever true. I think that relationships have always mattered; it’s just that, for a time, we were able to use advertising to create the illusion of relationships (think about the celebrity endorsement model of commercials). Now that real relationships are possible, the illusion is just not going to cut it anymore.

The more people use social media to speak their minds about experiences with products and services, the more businesses will benefit or suffer from the unsolicited opinions of individuals. The only difference between this and true word of mouth is that these opinions persist and can become very sticky. They are posted to blogs and forums, tweeted, texted, and so on, and are instantly visible to potentially thousands of people. Moreover, this information remains visible until it is buried by the daily onslaught of other commentary, unless it attracts attention. Then it gets sticky and spreads faster than any old-school PR maven could control. With satisfied customers, this is a good thing, but in cases of customer dissatisfaction, it could be disastrous. In last October’s newsletter, I discussed how understanding this new system is critical to managing your online reputation and recommended some tools and techniques to make that a part of your routine. I’d recommend reading it for more on how social media is reshaping business public relations. More recently, I wrote A Practical Guide to Social Media to evaluate business use of social networks for marketing.

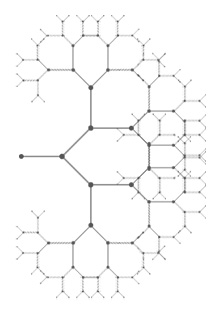

But the shifts I’ve described above are old news at this point; we already observe and understand them well. What is more interesting to consider is what I like to call the “fractalization” of the web. The connections across the web, whether personal, professional, or other, are growing in number and granularity, making the passage of information faster than we can even begin to comprehend. It used to be that two websites might be connected initially by a single, mutually agreed-upon link: “I’ll link to you if you link to me.” This kind of connection might look like a simple, straight line between two points. Then, portals were manually set up to organize websites by category. If someone working on the portal found your site, she might add it herself, or you could submit your link to the portal to be entered. If the portal was a point, you could now imagine many straight lines radiating out from it and connecting to other points. Once Google pioneered an algorithmic approach to indexing the content of the web, the portal became obsolete, and the vast number of connections became even clearer. This mastery over the web enabled Google to become one of the most quickly expanding and profitable companies in history. But an interesting shift is occurring now as a result of social media. Consider this quote from an article by Fred Vogelstein in this month’s issue of Wired, entitled The Great Wall of Facebook: The Social Network’s Plan to Dominate the Internet:

“In the eight months ending in April, Facebook has doubled in size to 200 million members, who contribute 4 billion pieces of info, 850 million photos, and 8 million videos every month. The result: a second Internet, one that includes users’ most personal data and resides entirely on Facebook’s servers.”

What Vogelstein is hinting at here is that a massive amount of data and connections, potentially larger than the web itself, is growing outside the reach of Google’s spiders. As long as that remains true (both the exponential growth of data and its exclusivity to social networks), Google’s dominance over the web and how we use it is at serious risk, probably even despite its upcoming “social” offering, Google Wave. Because Facebook, for example, keeps its data from being indexed by Google, the most numerous and granular connections, those that form the detailed tips of the fractal image I’m using, are not visible or navigable without participating in social media. This is a significant paradigm shift from the robotic to the personal, one that could ultimately render search engine optimization obsolete, especially if Facebook gets its way. A bit more from Vogelstein:

“Today, the Google-Facebook rivalry isn’t just going strong, it has evolved into a full-blown battle over the future of the Internet—its structure, design, and utility. For the last decade or so, the Web has been defined by Google’s algorithms—rigorous and efficient equations that parse practically every byte of online activity to build a dispassionate atlas of the online world. Facebook CEO Mark Zuckerberg envisions a more personalized, humanized Web, where our network of friends, colleagues, peers, and family is our primary source of information, just as it is offline. In Zuckerberg’s vision, users will query this “social graph” to find a doctor, the best camera, or someone to hire—rather than tapping the cold mathematics of a Google search. It is a complete rethinking of how we navigate the online world, one that places Facebook right at the center. In other words, right where Google is now.”

Vogelstein goes on to cover the struggle between Google and Facebook comprehensively, addressing not only the companies’ attempts to monetize user patterns and data accumulation, but also the ways that each has significantly directed the trajectory of how the web is used. About Google, Vogelstein writes, “It was only after it had made itself an essential part of everyone’s online life that its business path became clear—and it quickly grew to become one of the world’s most powerful and wealthy companies,” implicitly suggesting that Facebook, too, having made itself an essential part of many people’s lives, could discover a viable business plan.

Facebook’s prospective business plan depends completely upon the fractalization of the web: an increasingly tight-knit, organically created mesh of connections between individuals and the data they willingly share within the context of a trusted social network. Whether this is preferable to the more sterile and “objective” algorithmic approach of Google is a matter of opinion at the moment, which means that, for now, the choice between robots and humans is yours. However, if future users do turn en masse to social networks for the answers they currently get from Google, the focus will rightly shift from search engine optimization (SEO) to social media optimization (SMO).

The web is an ever-expanding space that can be described with many different metaphorical images. Whatever the web may look like, browser technology is, and will continue to be, the primary means by which we experience it. In the short term, the major browsers (Internet Explorer, Firefox, Safari, and Chrome) will be updating their JavaScript engines to enable some new and interesting features. In the long term, browser innovation will likely fork in two directions:

(1) The continuing refinement and expansion of functionality that will approach an operating-system level of sophistication.

(2) The addition of browser technology to currently unconnected objects, with specific filters to send and receive contextual data across the web.

In Part 1 of “The Future of the Web,” I’ll focus primarily on how the traditional browser will change; in Part 2, I’ll focus on how mobile devices and other objects will be connected to the web.

Firefox JavaScript Engine Updates

Very shortly, Mozilla will release an update to Firefox that includes a brand new JavaScript engine called TraceMonkey. Along with the new engine, which should provide a significant boost in speed, the update adds image-editing functionality and the ability to play videos without third-party software or codecs. Aside from the speed advances, which are always welcome, the image and video capabilities are perfect examples of how the browser is encroaching upon the operating system’s “territory.” Being able to edit images within the browser itself could eliminate the need for local image-editing software on your machine. At this point, it’s not certain what degree of sophistication this capability will reach initially, so it would be premature to uninstall Photoshop if you have it. However, the addition of this capability is indicative of a trend in that direction.

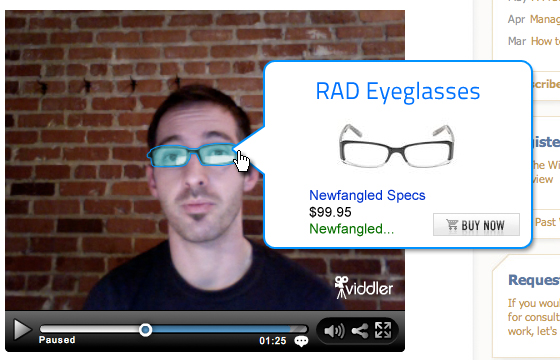

The new video functionality, which uses the open-source Ogg Theora format, will open up many possibilities, the first of them being freedom for developers who may not want to license codecs. Currently, developers may need to license codecs if they want their videos to play in proprietary players like Adobe’s Flash Player, but with Ogg, Firefox itself can play back the video. What will excite many, though, is that the new version of Firefox will enable interactivity between video and other applications on a web page. One potential application of this technology would be to let users click objects shown in a video and see additional information about them displayed alongside the playback. In the screenshot below, I’ve imagined how that functionality might look if you decided you wanted to buy the pair of glasses I’m wearing in the video from our November 2008 newsletter, Video Just Got Easier.
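To make the clickable-video idea a little more concrete, here is a minimal sketch, in TypeScript, of the lookup such a feature would need: each object in the video is tagged with a time range and a region of the frame, and a click is matched against whichever tags are active at the current playback time. The `Hotspot` shape, the sample data, and the function name are hypothetical illustrations, not part of Firefox or any video API.

```typescript
// Hypothetical tag for an object that appears in the video.
// Coordinates are fractions of the video frame (0 to 1), so they
// stay valid at any player size.
interface Hotspot {
  id: string;
  label: string;
  start: number; // seconds into the video when the object appears
  end: number;   // seconds when it disappears (exclusive)
  x: number;     // left edge of the region
  y: number;     // top edge of the region
  width: number;
  height: number;
}

// Return the hotspot under a click at (clickX, clickY) at playback time
// `time`, or undefined if the click missed every active hotspot.
function findHotspot(
  hotspots: Hotspot[],
  time: number,
  clickX: number,
  clickY: number
): Hotspot | undefined {
  for (let i = 0; i < hotspots.length; i++) {
    const h = hotspots[i];
    const active = time >= h.start && time < h.end;
    const inside =
      clickX >= h.x && clickX <= h.x + h.width &&
      clickY >= h.y && clickY <= h.y + h.height;
    if (active && inside) {
      return h;
    }
  }
  return undefined;
}

// Illustrative data: a pair of glasses visible from 10s to 25s.
const videoHotspots: Hotspot[] = [
  { id: "glasses", label: "Eyeglass frames", start: 10, end: 25,
    x: 0.4, y: 0.2, width: 0.2, height: 0.1 },
];

console.log(findHotspot(videoHotspots, 12, 0.5, 0.25)?.label); // Eyeglass frames
console.log(findHotspot(videoHotspots, 30, 0.5, 0.25));        // undefined
```

In a page, a click handler on the `<video>` element would pass in the element’s `currentTime` and the click position divided by the element’s width and height, then display the matching hotspot’s details next to the player.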

Holistic Browsing

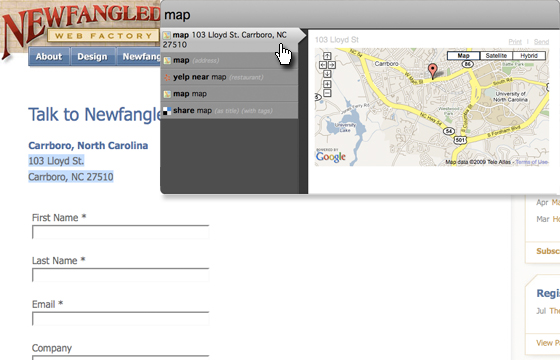

One limitation of web browsers that becomes more and more obvious as we make greater use of web applications in the cloud is the lack of usable connections between open tabs. I often find myself with many tabs open across the top of my browser, rapidly switching back and forth between Gmail, Google Calendar, Google Docs, and various social media tools, and, as nitpicky as it may sound, I get annoyed that I can’t just execute specific commands without copying and pasting content from one tab to another. With Ubiquity, Mozilla is attempting to connect the tools you use in your browser in a more intuitive and richer way. I’ve installed Ubiquity and find it to be an interesting step in the right direction, but its command-line approach may be a barrier to entry for those unable to let go of their mouse. In the screenshot below, you can see how Ubiquity allows me to quickly map the location shown on the web page without having to open another tab for Google Maps. Whatever its limitations, Ubiquity’s core capability, creating a holistic browsing experience by understanding basic commands and executing them with the correct web application, is certainly the direction the browser is headed. I can imagine that this approach, wedded with voice recognition software, will be the way we all navigate the web within the next decade, if not sooner.
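Ubiquity’s basic pattern, a typed verb followed by an argument and dispatched to the right web application, can be sketched in a few lines. This TypeScript sketch assumes a hypothetical command table with two verbs; it does not use Ubiquity’s own command API, and the URL patterns are illustrative assumptions.

```typescript
// Each handler turns the text after the verb into a URL to open.
type CommandHandler = (argument: string) => string;

// Hypothetical command table. The URL patterns are illustrative assumptions.
const commands: { [verb: string]: CommandHandler } = {
  map: (arg: string) =>
    "https://www.google.com/maps/search/?api=1&query=" + encodeURIComponent(arg),
  search: (arg: string) =>
    "https://www.google.com/search?q=" + encodeURIComponent(arg),
};

// Split "verb rest of input" on the first space and dispatch to the matching
// handler. Returns null for unknown verbs so the caller can offer suggestions.
function runCommand(input: string): string | null {
  const trimmed = input.trim();
  const space = trimmed.indexOf(" ");
  const verb = (space === -1 ? trimmed : trimmed.slice(0, space)).toLowerCase();
  const argument = space === -1 ? "" : trimmed.slice(space + 1).trim();
  // hasOwnProperty guards against inherited keys such as "toString".
  if (!Object.prototype.hasOwnProperty.call(commands, verb)) {
    return null;
  }
  return commands[verb](argument);
}

console.log(runCommand("map Portland, Maine"));
// https://www.google.com/maps/search/?api=1&query=Portland%2C%20Maine
console.log(runCommand("translate hola")); // null
```

Keeping the verbs in a plain lookup table means adding a new web application is one more entry, which is roughly the extensibility that makes this style of browsing appealing.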

In the early days, web pages operated primarily as static information providers, delivering a finite set of data to their visitors. Site administrators might update that data on some sort of schedule, but the process involved making a manual change on a local machine and then transferring the file to the live server via the File Transfer Protocol (FTP). Other than this kind of scheduled update, the site remained the same. Receiving user data was just as primitive: hit counters, “mailto” links, basic contact forms, and the like gave a site administrator basic feedback.

Today’s Websites are Synthesizers

Today, however, database-driven websites with content management systems enable a much more flexible and sophisticated delivery of information. Additionally, advanced tracking tools, CRM integration, and analytics tools have become standard for receiving data; between those sources, most sites receive more data than they can reasonably sort through and process. Stop and consider for a moment how important this shift has been: websites are no longer concerned only with their own data, but also with receiving and synthesizing data from other sources. This is significant, and another reason why corporate websites will remain “command central” for marketing. But now that we have loaded ourselves up with so much data, we need a much better way to sort through all of it efficiently and extract the specific answers we need about how our sites are performing.

Huge progress has, of course, been made in this area thanks to tools like Google Analytics, which, in my opinion, is the most valuable application Google has created so far. Ever since we started using it, we’ve wanted to combine its data with the tracking data we collect through our CMS, giving us a central location to build and pull custom reports about our site. I’m certain that anyone working with analytics, tracking, and CRM data sources would want this too. Google recently made it possible by releasing a developer API for its analytics tool. In fact, we’re using this API to build the newest version of our CMS, whose many new features include real-time reporting that merges analytics data with our own tracking system. The result is a powerful dashboard that allows site admins to quickly toggle between a big-picture view of their site activity and a more granular view of individual lead activity or keyword performance. In the image below, I’m showing you a sneak preview of how our CMS will deliver a page-specific consolidated overview of tracking and analytics data in real time. Incidentally, it’s also a sneak preview of an upcoming redesign of our site… more on that soon. On the back end, users will have a detailed dashboard providing the same kind of data sitewide. Trust me, this is going to be incredible.
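The core of a dashboard like this is a join: analytics rows and CMS tracking rows keyed by the same page path. The sketch below assumes both sources have already been fetched and mapped into simple hypothetical row shapes; it is not the Google Analytics API’s response format, and the leads-per-1,000-pageviews figure is just one example of a metric neither source can produce on its own.

```typescript
// Hypothetical row shapes; real API responses would be mapped into these first.
interface AnalyticsRow {
  pagePath: string;
  pageviews: number;
}

interface TrackingRow {
  pagePath: string;
  leads: number; // e.g. form submissions recorded by the CMS
}

interface PageReport {
  pagePath: string;
  pageviews: number;
  leads: number;
  leadsPerThousandViews: number;
}

// Join the two sources on page path. Pages missing from one source are kept
// with zeros so nothing silently drops out of the report.
function mergeReports(analytics: AnalyticsRow[], tracking: TrackingRow[]): PageReport[] {
  const byPath: { [path: string]: PageReport } = {};
  const ensure = (path: string): PageReport => {
    if (!Object.prototype.hasOwnProperty.call(byPath, path)) {
      byPath[path] = { pagePath: path, pageviews: 0, leads: 0, leadsPerThousandViews: 0 };
    }
    return byPath[path];
  };
  analytics.forEach((row) => { ensure(row.pagePath).pageviews += row.pageviews; });
  tracking.forEach((row) => { ensure(row.pagePath).leads += row.leads; });

  return Object.keys(byPath)
    .map((path) => {
      const r = byPath[path];
      // Avoid dividing by zero for pages the analytics source never saw.
      r.leadsPerThousandViews = r.pageviews > 0 ? (r.leads * 1000) / r.pageviews : 0;
      return r;
    })
    .sort((a, b) => b.leads - a.leads); // most productive pages first
}

const report = mergeReports(
  [{ pagePath: "/services", pageviews: 2000 }, { pagePath: "/blog", pageviews: 5000 }],
  [{ pagePath: "/services", leads: 12 }, { pagePath: "/contact", leads: 3 }]
);
// /services first (12 leads, 6 per 1,000 views), then /contact, then /blog
console.log(JSON.stringify(report));
```

Keeping unmatched pages with zeros is a deliberate choice: a page with plenty of traffic and no leads is often the most useful line in the report.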

Conversational Synthesis

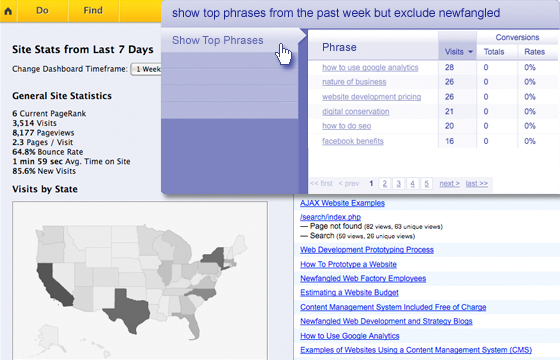

We’re not the only ones building a dashboard tool like the one I described; after all, it’s not so much a novel idea as a need we are all experiencing simultaneously. Nor am I likely the only one envisioning the next step, what I like to call “conversational synthesis.” What if, rather than relying upon a pre-configured dashboard, you could easily query your site using natural language and then save that query as a routine operation? This would be an incredible mashup of intuitive Ubiquity-like functionality and the speed and scope of our own updated dashboard. But more than that, it would be a conversational, and thus more human, approach to reporting and measurement. I see this as an essential and inevitable development, since the biggest barrier to good web analytics and data measurement right now is that many people faced with that responsibility are simply squeamish about numbers. Conversational synthesis wouldn’t remove the numbers, of course, but it would enable people to engage with their data in depth, regardless of whether they consider themselves “numbers people,” by replacing numeric operations with verbal commands. I imagine what this might look like in the screenshot below:

As with browsers, I can envision merging a tool like this with voice recognition software, allowing it to operate on spoken commands. Now that would be truly conversational!
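A toy version of conversational synthesis can be sketched by mapping a constrained English question onto the same kind of structured query a dashboard runs, then saving it under a name so it can be repeated. This TypeScript sketch uses a single hypothetical regular-expression grammar, not real natural-language processing; the question format, the field names, and the 30-day default window are all assumptions.

```typescript
// The structured query a dashboard could run; field names are hypothetical.
interface ReportQuery {
  metric: "visits" | "pageviews" | "leads";
  page: string;
  days: number;
}

// A deliberately tiny grammar:
// "how many <metric> (to|on|from) <page> [in the last <n> days][?]"
const QUERY_PATTERN =
  /^how many (visits|pageviews|leads) (?:to|on|from) (\S+?)(?: in the last (\d+) days)?\??$/i;

function parseQuestion(question: string): ReportQuery | null {
  const match = QUERY_PATTERN.exec(question.trim());
  if (!match) {
    return null; // outside the toy grammar; a real tool would ask the user to rephrase
  }
  return {
    metric: match[1].toLowerCase() as ReportQuery["metric"],
    page: match[2],
    days: match[3] ? parseInt(match[3], 10) : 30, // assumed default window
  };
}

// Saved routines: a name mapped to a parsed query, so a question asked once
// can be re-run later without retyping it.
const routines: { [name: string]: ReportQuery } = {};

function saveRoutine(name: string, question: string): boolean {
  const query = parseQuestion(question);
  if (query === null) {
    return false;
  }
  routines[name] = query;
  return true;
}

console.log(JSON.stringify(parseQuestion("How many leads from /pricing in the last 7 days?")));
// {"metric":"leads","page":"/pricing","days":7}
```

Anything outside the grammar returns `null` rather than a guess; the distance between this sketch and a tool that understands arbitrary questions is exactly the hard part of the idea.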