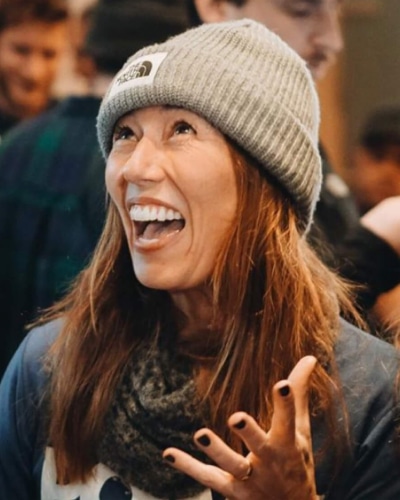

Testimonial

Newfangled's deep knowledge of prospect experience and their guidance on best practices helped us to create a site that’s not just inspiring, but that also motivates people to take action. Even better, they made the whole process smooth, easy, and even fun.

Kim Grob

Founding Partner, Right On